Auto deflating Event Hubs with a function app

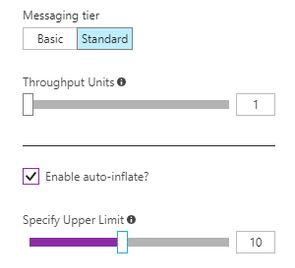

Event Hubs has supported auto-inflate (scale-up) for a while now, but there is no equivalent auto-deflate (scale-down). If your workload doesn't have sustained throughput requirements, you'll probably benefit from periodically scaling back.

Assuming you allow your hub to inflate to 10 throughput units but most of the time you only need 1, at current pricing that represents an overpayment of $0.27/hour, or $2,300/year. Over multiple hubs (don't forget your dev/test instances!) it quickly adds up.
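The arithmetic behind that figure can be sketched in a few lines of C#. This is only an illustration: the class and method names are hypothetical, and the $0.03 per throughput unit per hour rate is an assumption derived from the $0.27/hour figure above (nine surplus units), not a quoted price.

```csharp
using System;

public static class DeflateSavings
{
    // Assumed rate: the $0.27/hour overpayment spread across 9 surplus units.
    public const decimal AssumedPricePerUnitHour = 0.03m;

    // Cost per hour of the throughput units provisioned beyond what is needed.
    public static decimal HourlyOverpayment(int provisionedUnits, int neededUnits)
    {
        int surplus = Math.Max(0, provisionedUnits - neededUnits);
        return surplus * AssumedPricePerUnitHour;
    }

    // The same overpayment sustained for a 365-day year.
    public static decimal YearlyOverpayment(int provisionedUnits, int neededUnits)
    {
        return HourlyOverpayment(provisionedUnits, neededUnits) * 24 * 365;
    }
}
```

With these assumptions, `DeflateSavings.YearlyOverpayment(10, 1)` comes to 2365.20, in line with the rough $2,300/year quoted above.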

Doing this manually is possible (and right now the comments on the auto-inflate article suggest this is the way to go), but we can instead build and deploy a simple function app that periodically takes care of all of our event hubs. The great thing about auto-inflate is that if we do scale back and the workload then needs more throughput, it'll scale right back up again.

Where is the auto deflate checkbox?

Pre-requisites

In order to deploy the solution we'll need:

- Visual Studio 2017 with Azure Development workload (Community edition is fine)

- An app service to deploy a function app to

- A service principal which has owner permissions on the resource group(s) containing the namespaces we want to scale down

- Details of your tenant, subscription, and resource group(s)

Function App

The Azure documentation will take you through creating a function app if you don't already have one (which will create the required app service).

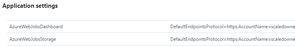

Once the function app is created grab the values for AzureWebJobsStorage and AzureWebJobsDashboard from the application settings (they're both the same value). These are needed for you to test your function locally.

Service Principal

To create the service principal we're going to use Azure PowerShell, though you can also use the Azure portal.

The script below has been adapted from the Azure authentication for dotnet SDK article.

We're granting the 'Scaler' app the right to modify a single resource group in the example below. If you want the app to modify Event Hubs namespaces in multiple resource groups, you need to grant it Owner rights on each resource group.

$subscriptionName = "<your subscription name>"

$appDisplayName = "Scaler" # This can be anything you want

$appPassword = "<a strong password for your app>"

$resourceGroupName = "<resource group containing your event hub>"

Login-AzureRmAccount # You'll be prompted to login

Select-AzureRmSubscription -SubscriptionName $subscriptionName

$sp = New-AzureRmADServicePrincipal -DisplayName $appDisplayName -Password $appPassword

New-AzureRmRoleAssignment -ServicePrincipalName $sp.ApplicationId -RoleDefinitionName Owner -ResourceGroupName $resourceGroupName

$sp | Select DisplayName, ApplicationId # You'll need the ApplicationId later

While you're connected you can use the following command to get the values you need for TenantId and SubscriptionId:

Get-AzureRmSubscription | Select-Object SubscriptionId, TenantId, SubscriptionName

Configuring the app for local testing

Clone the application from the ScaleDownEventHubs repository on GitHub.

Once cloned, modify your local.settings.json file to look like the example file, using the values you acquired in the previous steps.

Your ClientId is the ApplicationId, and the ClientSecret is the password you used.

{

"IsEncrypted": false,

"Values": {

"AzureWebJobsStorage": "<your connection string>",

"AzureWebJobsDashboard": "<your connection string>",

"ClientId": "<your client id>",

"ClientSecret": "<your client secret>",

"TenantId": "<your tenant id>"

}

}

You can now add one or more event hub namespaces to the function (in ScaleDown.cs).

var namespaces = new List<EventhubNamespace>

{

new EventhubNamespace("<subscription id>", "<resource group>", "<namespace>", 1)

};

When the function runs it will use the credentials from your local.settings.json file to check each namespace against its target capacity (the last argument, 1 in the example above). If the namespace's current throughput is higher than the target, the function reduces it to the target.
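The comparison described above can be sketched as a small, Azure-free helper. This is a minimal illustration rather than the repository's actual code: `NamespaceSnapshot` and `ScaleDownPlanner` are hypothetical names, and the current capacity is assumed to have already been read from the namespace.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical snapshot of one namespace; not the repository's EventhubNamespace class.
public sealed class NamespaceSnapshot
{
    public NamespaceSnapshot(string name, int currentCapacity, int targetCapacity)
    {
        Name = name;
        CurrentCapacity = currentCapacity;
        TargetCapacity = targetCapacity;
    }

    public string Name { get; }
    public int CurrentCapacity { get; }  // throughput units currently provisioned
    public int TargetCapacity { get; }   // throughput units we want to deflate to
}

public static class ScaleDownPlanner
{
    // Scale down only when the namespace is above its target; never scale up.
    public static bool ShouldScaleDown(int currentCapacity, int targetCapacity)
    {
        return currentCapacity > targetCapacity;
    }

    // Names of the namespaces that would be reduced on this run.
    public static List<string> NamespacesToReduce(IEnumerable<NamespaceSnapshot> snapshots)
    {
        return snapshots
            .Where(s => ShouldScaleDown(s.CurrentCapacity, s.TargetCapacity))
            .Select(s => s.Name)
            .ToList();
    }
}
```

A namespace at 5 throughput units with a target of 1 would be selected for reduction, while the same namespace with a target of 20 would be left alone.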

In the portal you'll see reference to Throughput or Throughput Units - in the SDK this is captured by the Capacity property. For more information on the library we're using to manage the Event Hubs you can read the Event Hubs management libraries documentation.

To test this without making any changes you can comment out the update line, or set the target capacity to 20 (if the target capacity is higher than the current capacity the function will not attempt to scale up; that is what auto-inflate is for!).

By default the function is set to run once a day at 01:30. For testing purposes you'll probably want to change it to run every minute.

// Default - daily at 01:30

public static void Run([TimerTrigger("0 30 1 * * *")]TimerInfo myTimer, TraceWriter log)

// For testing - every minute

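public static void Run([TimerTrigger("0 */1 * * * *")]TimerInfo myTimer, TraceWriter log)

The first field of the schedule is seconds, so it needs to be 0 rather than * for the function to fire once a minute instead of every second. For more examples of how to specify the frequency see the timer documentation.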

If you run the example locally you should see output similar to the below, where we can see our namespace is currently at 5 Throughput units, so we take no action (as the target was 20).

Deploying the app

Publishing the app from Visual Studio will only deploy the function - by default the settings won't get deployed. You can either use the function CLI to deploy your settings, or add them in the portal. The portal will already have the storage account settings, so the key settings you need to add are ClientId, TenantId and ClientSecret.

Once published the app will start executing on the timer you have specified.

You can monitor execution through the function app logs, Application Insights (if you add the required integration), or the Azure activity log. Every time the capacity of the namespace is changed there will be entries in the operational logs.